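I. Introduction to Kubernetes

Kubernetes (K8s for short) is an open-source container cluster management system that automates the deployment, scaling, and maintenance of container clusters. It is both a container orchestration tool and a leading distributed-architecture solution built on container technology. Building on Docker technology, it gives containerized applications deployment and runtime support, resource scheduling, service discovery, and dynamic scaling, which makes large container clusters easier to manage.

A K8s cluster has two types of nodes: control-plane (management) nodes and worker nodes. Control-plane nodes manage the cluster: they handle information exchange between nodes and task scheduling, and they manage the lifecycle of containers, Pods, Namespaces, PVs, and other objects. Worker nodes supply compute resources for containers and Pods, and all Pods and containers run on them. Each worker node talks to the control-plane nodes through the kubelet service to manage container lifecycles, and also communicates with the other nodes in the cluster.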

II. Preparing the environment for the K8s cluster

1. Environment architecture

| IP | Hostname | Operating system | Kubelet version | Role |
| --- | --- | --- | --- | --- |
| 192.168.2.200 | k8s-master3 | CentOS 7.9.2009 | v1.27.6 | Control-plane node |
| 192.168.2.199 | k8s-master2 | CentOS 7.9.2009 | v1.27.6 | Control-plane node |
| 192.168.2.198 | k8s-master1 | CentOS 7.9.2009 | v1.27.6 | Control-plane node |
| 192.168.2.197 | k8s-node3 | CentOS 7.9.2009 | v1.27.6 | Worker node |
| 192.168.2.196 | k8s-node2 | CentOS 7.9.2009 | v1.27.6 | Worker node |
| 192.168.2.195 | k8s-node1 | CentOS 7.9.2009 | v1.27.6 | Worker node |

2. Configure hostnames

Note: the steps below must be performed on every node; each node sets its own hostname.

# Master1

[root@localhost ~]# hostnamectl set-hostname k8s-master1 --static

# Master2

[root@localhost ~]# hostnamectl set-hostname k8s-master2 --static

# Master3

[root@localhost ~]# hostnamectl set-hostname k8s-master3 --static

# Node1

[root@localhost ~]# hostnamectl set-hostname k8s-node1 --static

# Node2

[root@localhost ~]# hostnamectl set-hostname k8s-node2 --static

# Node3

[root@localhost ~]# hostnamectl set-hostname k8s-node3 --static

[root@k8s-master1 ~]# cat >>/etc/hosts <<EOF

192.168.2.200 k8s-master3

192.168.2.199 k8s-master2

192.168.2.198 k8s-master1

192.168.2.197 k8s-node3

192.168.2.196 k8s-node2

192.168.2.195 k8s-node1

EOF
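Because `cat >>` appends unconditionally, running the step above twice leaves duplicate entries in /etc/hosts. A minimal sketch of an idempotent variant follows. The `HOSTS_FILE` variable and the `add_host_entry` helper are illustrative names that are not part of the original steps, and the default target is a scratch file so the loop can be tried safely.

```shell
#!/usr/bin/env bash
# Sketch: add the cluster's name-resolution entries without duplicating them.
# HOSTS_FILE is a hypothetical variable; it defaults to a scratch file.
# Set HOSTS_FILE=/etc/hosts on a real node.
HOSTS_FILE="${HOSTS_FILE:-$(mktemp)}"

# Append "ip name" unless some line already lists that exact hostname.
add_host_entry() {
  local ip="$1" name="$2" file="$3"
  if ! awk -v n="$name" '{ for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
                         END { exit !found }' "$file"; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}

while read -r ip name; do
  add_host_entry "$ip" "$name" "$HOSTS_FILE"
done <<'EOF'
192.168.2.200 k8s-master3
192.168.2.199 k8s-master2
192.168.2.198 k8s-master1
192.168.2.197 k8s-node3
192.168.2.196 k8s-node2
192.168.2.195 k8s-node1
EOF

echo "entries in $HOSTS_FILE: $(grep -c '' "$HOSTS_FILE")"
```

The hostname is compared as a whole field, so k8s-node1 is not mistaken for a longer name such as k8s-node10.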

3. Disable the firewall and SELinux

[root@k8s-master1 ~]# systemctl stop firewalld.service

[root@k8s-master1 ~]# systemctl disable firewalld.service

[root@k8s-master1 ~]# setenforce 0

[root@k8s-master1 ~]# sed -i "s/SELINUX=enforcing/SELINUX=disabled/g" /etc/selinux/config

4. Disable the swap partition

[root@k8s-master1 ~]# swapoff -a

[root@k8s-master1 ~]# sed -i '/swap/s/^/#/g' /etc/fstab
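The sed expression above prefixes every line that contains "swap", including lines that are already commented, so a second run stacks another `#`. The sketch below comments active swap entries only once. The `comment_swap_entries` helper is an illustrative name, GNU sed is assumed, and the demonstration runs on a scratch file rather than the real /etc/fstab.

```shell
#!/usr/bin/env bash
# Sketch: comment out active swap entries exactly once (GNU sed assumed).
# Usage on a real node (assumption): comment_swap_entries /etc/fstab
comment_swap_entries() {
  local fstab="$1"
  # Skip lines that already start with '#'; on the rest, prefix '#'
  # when a whitespace-delimited "swap" field is present.
  sed -i -E '/^[[:space:]]*#/!{/[[:space:]]swap[[:space:]]/s/^/#/}' "$fstab"
}

# Try it on a scratch copy first.
sample="$(mktemp)"
cat > "$sample" <<'EOF'
/dev/mapper/centos-root /     xfs   defaults  0 0
/dev/mapper/centos-swap swap  swap  defaults  0 0
#/dev/sdb1              swap  swap  defaults  0 0
EOF

comment_swap_entries "$sample"
comment_swap_entries "$sample"   # a second run changes nothing
cat "$sample"
```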

5. Configure and tune kernel parameters

[root@k8s-master1 ~]# cat > /etc/sysctl.d/k8s.conf <<EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

net.ipv4.ip_forward = 1

EOF

[root@k8s-master1 ~]# sysctl --system
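The two net.bridge.* keys exist only while the br_netfilter kernel module is loaded, and this guide loads that module later, in the containerd step. If `sysctl --system` reports those keys as missing, rerun it after `modprobe br_netfilter`. The sketch below compares a sysctl file with the live values. The `check_sysctl_file` function and its second argument are illustrative; the second argument exists only so the function can be pointed at a fake tree.

```shell
#!/usr/bin/env bash
# Sketch: compare "key = value" lines in a sysctl file with the live values.
# The second argument (root of the sysctl tree) is a hypothetical knob that
# defaults to /proc/sys.
check_sysctl_file() {
  local conf="$1" root="${2:-/proc/sys}" rc=0 key value path actual
  while IFS='=' read -r key value; do
    key="${key//[[:space:]]/}"
    value="${value//[[:space:]]/}"
    case "$key" in ''|'#'*) continue ;; esac   # skip blanks and comments
    path="$root/$(printf '%s' "$key" | tr . /)"
    if [ ! -r "$path" ]; then
      echo "MISSING  $key (for net.bridge.* keys, is br_netfilter loaded?)"
      rc=1
    elif actual="$(cat "$path")" && [ "$actual" = "$value" ]; then
      echo "OK       $key = $actual"
    else
      echo "MISMATCH $key: want $value, have $actual"
      rc=1
    fi
  done < "$conf"
  return "$rc"
}

# On a node: check_sysctl_file /etc/sysctl.d/k8s.conf
```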

6. Install ipset and ipvsadm

[root@k8s-master1 ~]# yum -y install conntrack ipvsadm ipset jq iptables curl sysstat libseccomp wget vim net-tools git

[root@k8s-master1 ~]# cat >/etc/modules-load.d/ipvs.conf <<EOF

# Load IPVS at boot

ip_vs

ip_vs_rr

ip_vs_wrr

ip_vs_sh

nf_conntrack

nf_conntrack_ipv4

EOF

[root@k8s-master1 ~]# systemctl enable --now systemd-modules-load.service

# Confirm that the kernel modules loaded successfully

[root@k8s-master1 ~]# lsmod |egrep "ip_vs|nf_conntrack_ipv4"

nf_conntrack_ipv4 15053 0

nf_defrag_ipv4 12729 1 nf_conntrack_ipv4

ip_vs_sh 12688 0

ip_vs_wrr 12697 0

ip_vs_rr 12600 0

ip_vs 145458 6 ip_vs_rr,ip_vs_sh,ip_vs_wrr

nf_conntrack 139264 2 ip_vs,nf_conntrack_ipv4

libcrc32c 12644 3 xfs,ip_vs,nf_conntrack
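The module list above matches the 3.10 kernel shipped with CentOS 7.9. On upstream kernels from 4.19 onward, nf_conntrack_ipv4 was folded into nf_conntrack, so the entry in ipvs.conf would need to change if the kernel is upgraded. A small sketch of that decision follows; the function name is illustrative, and vendor kernels with backports may not follow the upstream cut-off.

```shell
#!/usr/bin/env bash
# Sketch: pick the conntrack module name for a given kernel release string.
# Assumption: upstream kernels from 4.19 on no longer ship nf_conntrack_ipv4;
# vendor kernels with backports may differ.
conntrack_module_for_kernel() {
  local release="$1" major minor
  major="${release%%.*}"          # text before the first dot
  minor="${release#*.}"           # text after the first dot
  minor="${minor%%[!0-9]*}"       # keep only the leading digits
  if [ "$major" -gt 4 ] || { [ "$major" -eq 4 ] && [ "$minor" -ge 19 ]; }; then
    echo nf_conntrack
  else
    echo nf_conntrack_ipv4
  fi
}

conntrack_module_for_kernel "$(uname -r)"
```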

7. Install containerd

1) Install the dependency packages

[root@k8s-master1 ~]# yum -y install yum-utils device-mapper-persistent-data lvm2

2) Add the Aliyun Docker repository

[root@k8s-master1 ~]# yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

3) Load the overlay and br_netfilter modules

[root@k8s-master1 ~]# cat >>/etc/modules-load.d/containerd.conf <<EOF

overlay

br_netfilter

EOF

[root@k8s-master1 ~]# modprobe overlay

[root@k8s-master1 ~]# modprobe br_netfilter

4) Install containerd (the latest available version is installed here)

[root@k8s-master1 ~]# yum -y install containerd.io

5) Create the containerd configuration file

[root@k8s-master1 ~]# mkdir -p /etc/containerd

[root@k8s-master1 ~]# containerd config default > /etc/containerd/config.toml

[root@k8s-master1 ~]# sed -i '/SystemdCgroup/s/false/true/g' /etc/containerd/config.toml

[root@k8s-master1 ~]# sed -i '/sandbox_image/s/registry.k8s.io/registry.aliyuncs.com\/google_containers/g' /etc/containerd/config.toml

6) Start containerd

[root@k8s-master1 ~]# systemctl enable containerd

[root@k8s-master1 ~]# systemctl start containerd
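Both sed edits in step 5 fail silently if the generated config.toml does not contain the expected text, so it may be worth confirming the result before moving on. The sketch below is one way to do that; `check_containerd_config` is an illustrative helper, not a containerd tool. Note also that the pause tag in the generated default depends on the containerd version and may differ from the pause:3.9 that kubeadm lists later.

```shell
#!/usr/bin/env bash
# Sketch: confirm that the two sed edits from step 5 took effect.
check_containerd_config() {
  local cfg="${1:-/etc/containerd/config.toml}" rc=0
  if ! grep -Eq '^[[:space:]]*SystemdCgroup[[:space:]]*=[[:space:]]*true' "$cfg"; then
    echo "SystemdCgroup is not set to true"
    rc=1
  fi
  if ! grep -Eq '^[[:space:]]*sandbox_image[[:space:]]*=[[:space:]]*"registry\.aliyuncs\.com/google_containers/' "$cfg"; then
    echo "sandbox_image does not point at the Aliyun mirror"
    rc=1
  fi
  [ "$rc" -eq 0 ] && echo "containerd config matches the guide"
  return "$rc"
}

# On a node: check_containerd_config
```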

III. Installing Nginx + Keepalived

1. Environment architecture

| IP address | Hostname | Operating system | VIP | Role |
| --- | --- | --- | --- | --- |
| 192.168.2.201 | k8s-nginx1 | CentOS 7.9.2009 | 192.168.2.188 | Nginx+Keepalived |
| 192.168.2.202 | k8s-nginx2 | CentOS 7.9.2009 | 192.168.2.188 | Nginx+Keepalived |

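2. Install Nginx

Run the steps in this subsection on both k8s-nginx1 and k8s-nginx2; the prompts below show k8s-nginx1 only. Set the hostname on each load-balancer node first: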

# Nginx1

[root@localhost ~]# hostnamectl set-hostname k8s-nginx1 --static

# Nginx2

[root@localhost ~]# hostnamectl set-hostname k8s-nginx2 --static

1) Configure the Yum repository

[root@k8s-nginx1 ~]# rpm -Uvh http://nginx.org/packages/centos/7/noarch/RPMS/nginx-release-centos-7-0.el7.ngx.noarch.rpm

2) Install Nginx

[root@k8s-nginx1 ~]# yum -y install nginx

3) Configure Nginx

[root@k8s-nginx1 ~]# vim /etc/nginx/nginx.conf

user nginx nginx;

worker_processes auto;

pid /var/run/nginx.pid;

events {

use epoll;

worker_connections 10240;

multi_accept on;

}

http {

include mime.types;

default_type application/octet-stream;

log_format json escape=json

'{"访问者IP":"$remote_addr",'

'"访问时间":"$time_iso8601",'

'"访问页面":"$uri",'

'"请求返回时间":"$request_time/S",'

'"请求方法类型":"$request_method",'

'"请求状态":"$status",'

'"请求体大小":"$body_bytes_sent/B",'

'"访问者搭载的系统配置和软件类型":"$http_user_agent",'

'"虚拟服务器IP":"$server_addr"}';

access_log /var/log/nginx/access.log json;

error_log /var/log/nginx/error.log warn;

sendfile on;

tcp_nopush on;

keepalive_timeout 120;

tcp_nodelay on;

server_tokens off;

gzip on;

gzip_min_length 1k;

gzip_buffers 4 64k;

gzip_http_version 1.1;

gzip_comp_level 4;

gzip_types text/plain application/x-javascript text/css application/xml;

gzip_vary on;

client_max_body_size 10m;

client_body_buffer_size 128k;

proxy_connect_timeout 90;

proxy_send_timeout 90;

proxy_buffer_size 4k;

proxy_buffers 4 32k;

proxy_busy_buffers_size 64k;

large_client_header_buffers 4 4k;

client_header_buffer_size 4k;

open_file_cache_valid 30s;

open_file_cache_min_uses 1;

}

stream {

log_format main '$remote_addr $upstream_addr - [$time_local] $status $upstream_bytes_sent';

access_log /var/log/nginx/k8s-access.log main;

upstream k8s-apiserver {

server 192.168.2.198:6443 weight=5 max_fails=3 fail_timeout=30s;

server 192.168.2.199:6443 weight=5 max_fails=3 fail_timeout=30s;

server 192.168.2.200:6443 weight=5 max_fails=3 fail_timeout=30s;

}

server {

listen 6443;

proxy_pass k8s-apiserver;

}

}

4) Check the configuration syntax

[root@k8s-nginx1 ~]# nginx -t

5) Enable start on boot

[root@k8s-nginx1 ~]# systemctl enable nginx

6) Start Nginx

[root@k8s-nginx1 ~]# systemctl start nginx

7) Check the listening port

[root@k8s-nginx1 ~]# netstat -lntup |grep 6443
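netstat only shows that Nginx itself listens on 6443. To see whether the three API servers behind the stream upstream accept connections, a quick TCP probe can help. The sketch below uses bash's built-in /dev/tcp redirection; `port_open` and `check_apiserver_backends` are illustrative names. Before `kubeadm init` has run, the backends are expected to be unreachable.

```shell
#!/usr/bin/env bash
# Sketch: test whether a TCP port accepts connections, using bash's
# built-in /dev/tcp redirection (no extra packages needed).
port_open() {
  local host="$1" port="$2"
  timeout 3 bash -c "exec 3<>/dev/tcp/$host/$port" 2>/dev/null
}

# Probe the three upstream servers from the stream block above.
check_apiserver_backends() {
  local backend
  for backend in 192.168.2.198 192.168.2.199 192.168.2.200; do
    if port_open "$backend" 6443; then
      echo "$backend:6443 reachable"
    else
      echo "$backend:6443 not reachable"
    fi
  done
}

# On a load-balancer node: check_apiserver_backends
```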

3. Install Keepalived

1) Install Keepalived

[root@k8s-nginx1 ~]# yum -y install keepalived

[root@k8s-nginx2 ~]# yum -y install keepalived

2) Configure Keepalived

[root@k8s-nginx1 ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

675583110@qq.com

}

notification_email_from 675583110@qq.com

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id LVS_DEVEL

}

vrrp_script check_nginx {

script "/data/scripts/check_nginx.sh"

interval 2

weight 2

}

vrrp_instance VI_1 {

state MASTER

interface ens33

virtual_router_id 51

mcast_src_ip 192.168.2.201

priority 100

advert_int 1

authentication {

auth_type PASS

auth_pass yangxingzhen.com

}

virtual_ipaddress {

192.168.2.188

}

track_script {

check_nginx

}

}

[root@k8s-nginx2 ~]# vim /etc/keepalived/keepalived.conf

! Configuration File for keepalived

global_defs {

notification_email {

675583110@qq.com

}

notification_email_from 675583110@qq.com

smtp_server 127.0.0.1

smtp_connect_timeout 30

router_id LVS_DEVEL

}

vrrp_script check_nginx {

script "/data/scripts/check_nginx.sh"

interval 2

weight 2

}

vrrp_instance VI_1 {

state BACKUP

interface ens33

virtual_router_id 51

mcast_src_ip 192.168.2.202

priority 99

advert_int 1

authentication {

auth_type PASS

auth_pass yangxingzhen.com

}

virtual_ipaddress {

192.168.2.188

}

track_script {

check_nginx

}

}

3) Create the Nginx health-check script

[root@k8s-nginx1 ~]# mkdir -p /data/scripts

[root@k8s-nginx1 ~]# vim /data/scripts/check_nginx.sh

#!/bin/bash

#Date:2023-10-7 18:20:54

Num=$(ps -ef | grep -v grep | grep -c "nginx: master process")

if [ ${Num} -eq 0 ];then

systemctl stop keepalived

if [ $? -eq 0 ];then

echo "Keepalived Stop Success..."

fi

fi

[root@k8s-nginx2 ~]# mkdir -p /data/scripts

[root@k8s-nginx2 ~]# vim /data/scripts/check_nginx.sh

#!/bin/bash

#Date:2023-10-7 18:20:54

Num=$(ps -ef | grep -v grep | grep -c "nginx: master process")

if [ ${Num} -eq 0 ];then

systemctl stop keepalived

if [ $? -eq 0 ];then

echo "Keepalived Stop Success..."

fi

fi
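The script above stops Keepalived when Nginx is gone, which is why Keepalived has to be restarted by hand after a failover. An alternative that some setups prefer is to report health through the exit status and let the `weight` setting shift the priority. As I understand Keepalived's weight behaviour, a positive weight is added to the priority while the script exits 0, so a healthy k8s-nginx2 (99 + 2) would outrank an unhealthy k8s-nginx1 (100). The sketch below is an assumption-laden variant and not the author's script; `nginx_alive` is an illustrative name, and the pid file path comes from the `pid` directive in the nginx.conf above.

```shell
#!/bin/bash
# Sketch of an alternative check_nginx.sh: report health through the exit
# status instead of stopping Keepalived.
nginx_alive() {
  local pidfile="${1:-/var/run/nginx.pid}" pid
  [ -r "$pidfile" ] || return 1
  pid="$(cat "$pidfile")"
  # A live process either answers signal 0 or has a /proc entry.
  [ -n "$pid" ] && { kill -0 "$pid" 2>/dev/null || [ -d "/proc/$pid" ]; }
}

if nginx_alive; then
  echo "nginx master process is running"
else
  echo "nginx master process is not running"
fi
# In the real check script, finish with:  nginx_alive; exit $?
```

A stale pid file can point at a reused process ID, so this check is weaker than asking Nginx itself; treat it as a starting point.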

4) Make the script executable

[root@k8s-nginx1 ~]# chmod +x /data/scripts/check_nginx.sh

[root@k8s-nginx2 ~]# chmod +x /data/scripts/check_nginx.sh

5) Start Keepalived

[root@k8s-nginx1 ~]# systemctl start keepalived

[root@k8s-nginx1 ~]# systemctl enable keepalived

[root@k8s-nginx2 ~]# systemctl start keepalived

[root@k8s-nginx2 ~]# systemctl enable keepalived

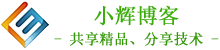

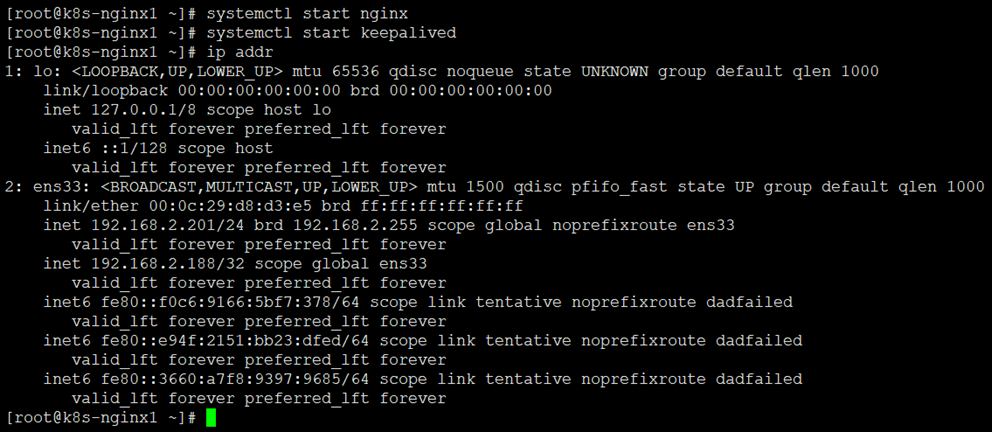

6) Check that the VIP has been assigned

[root@k8s-nginx1 ~]# ip addr

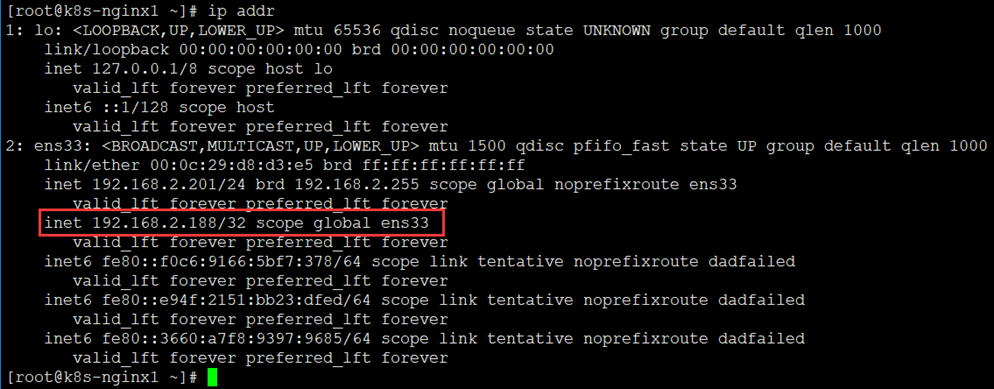

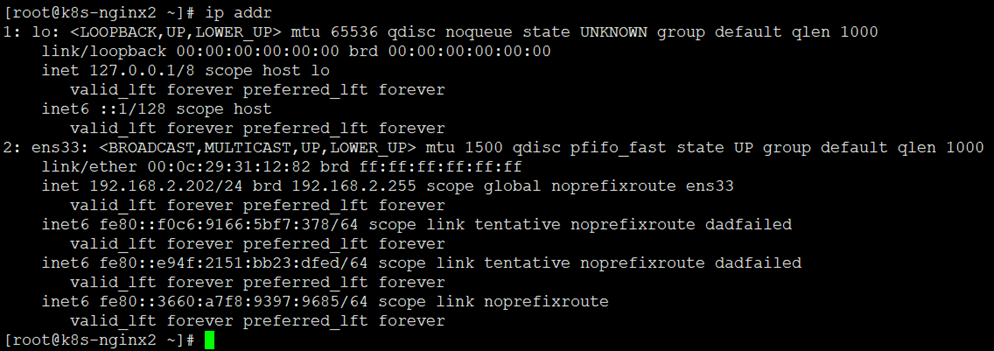
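Reading the full `ip addr` output by eye works, but a scripted check is handy when repeating the failover test. The sketch below reports whether the VIP from the table above is present in `ip -4 addr` output; `holds_vip` is an illustrative helper name.

```shell
#!/usr/bin/env bash
# Sketch: succeed when the given address appears in "ip -4 addr" output
# read from standard input.
holds_vip() {
  local vip="$1"
  awk -v vip="$vip" '
    $1 == "inet" { split($2, a, "/"); if (a[1] == vip) found = 1 }
    END { exit !found }'
}

# On a load-balancer node:
#   ip -4 addr show | holds_vip 192.168.2.188 && echo "this node holds the VIP"
```

The address is compared as a whole, so 192.168.2.18 does not match 192.168.2.188.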

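7) Verify that the VIP fails over correctly

# The virtual IP is currently on k8s-nginx1. Stop the Nginx service on k8s-nginx1, then run ip addr on k8s-nginx2 to check whether the address has moved.

# The address has moved to the k8s-nginx2 node.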

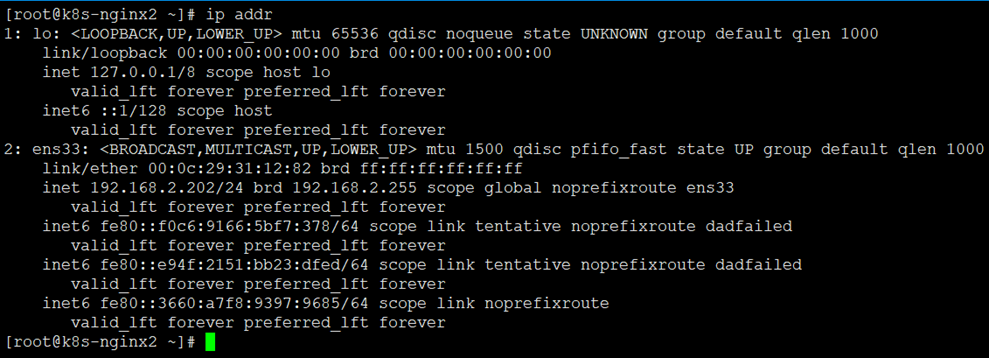

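8) Restart Nginx and Keepalived

# Once Nginx and Keepalived are started again on k8s-nginx1, the VIP moves back to k8s-nginx1 and is no longer present on k8s-nginx2, which shows that the Keepalived + Nginx high-availability setup works.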

IV. Installing kubectl, kubelet, and kubeadm

1. Add the Aliyun Kubernetes repository

[root@k8s-master1 ~]# cat >/etc/yum.repos.d/kubernetes.repo <<EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

2. Install kubectl, kubelet, and kubeadm

1) List all available versions

[root@k8s-master1 ~]# yum list kubelet --showduplicates |grep 1.27

kubelet.x86_64 1.27.0-0 kubernetes

kubelet.x86_64 1.27.1-0 kubernetes

kubelet.x86_64 1.27.2-0 kubernetes

kubelet.x86_64 1.27.3-0 kubernetes

kubelet.x86_64 1.27.4-0 kubernetes

kubelet.x86_64 1.27.5-0 kubernetes

kubelet.x86_64 1.27.6-0 kubernetes

2) Install version 1.27.6, the latest release at the time of writing

[root@k8s-master1 ~]# yum -y install kubectl-1.27.6 kubelet-1.27.6 kubeadm-1.27.6

3) Enable and start kubelet

[root@k8s-master1 ~]# systemctl enable kubelet

[root@k8s-master1 ~]# systemctl start kubelet

V. Deploying the Kubernetes cluster

1. Initialize the Kubernetes cluster

1) List the images required to initialize K8s v1.27.6

[root@k8s-master1 ~]# kubeadm config images list --kubernetes-version=v1.27.6

registry.k8s.io/kube-apiserver:v1.27.6

registry.k8s.io/kube-controller-manager:v1.27.6

registry.k8s.io/kube-scheduler:v1.27.6

registry.k8s.io/kube-proxy:v1.27.6

registry.k8s.io/pause:3.9

registry.k8s.io/etcd:3.5.7-0

registry.k8s.io/coredns/coredns:v1.10.1

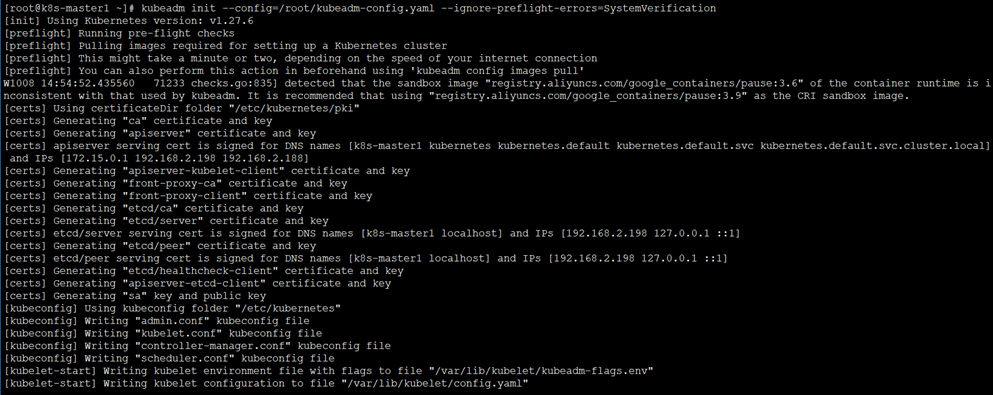
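The list above uses the default registry.k8s.io repository, while the kubeadm configuration in the next step sets `imageRepository` to the Aliyun mirror. The sketch below shows how the names map across; `to_mirror` is an illustrative helper. It assumes that kubeadm drops the extra `coredns/` path component when a custom repository is set, so CoreDNS is pulled as `<mirror>/coredns:<tag>`.

```shell
#!/usr/bin/env bash
# Sketch: rewrite the default image names to a mirror repository.
# Assumption: with a custom repository the "coredns/" sub-path is dropped.
to_mirror() {
  local mirror="$1"
  sed -E -e "s#^registry\.k8s\.io/coredns/#${mirror}/#" \
         -e "s#^registry\.k8s\.io/#${mirror}/#"
}

# On k8s-master1:
#   kubeadm config images list --kubernetes-version=v1.27.6 \
#     | to_mirror registry.aliyuncs.com/google_containers
```

kubeadm can also print the mirrored names directly when `--image-repository` is passed to `kubeadm config images list`.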

2) Initialize the cluster

[root@k8s-master1 ~]# vim /root/kubeadm-config.yaml

apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.27.6
controlPlaneEndpoint: 192.168.2.188:6443
imageRepository: registry.aliyuncs.com/google_containers
networking:
  podSubnet: 172.16.0.0/16
  serviceSubnet: 172.15.0.0/16
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs

[root@k8s-master1 ~]# kubeadm init --config=/root/kubeadm-config.yaml --ignore-preflight-errors=SystemVerification
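One detail in the configuration is worth checking before reusing it: the private range defined by RFC 1918 is 172.16.0.0/12 (172.16.0.0 to 172.31.255.255), so the serviceSubnet 172.15.0.0/16 lies just outside it. It works inside the cluster, but it may shadow real public addresses. The sketch below tests whether a CIDR's network address is private; all three function names are illustrative.

```shell
#!/usr/bin/env bash
# Sketch: check whether a CIDR's network address lies in an RFC 1918 range.
ip_to_int() {
  local IFS=.
  set -- $1                      # split the dotted quad into four fields
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# cidr_contains <network/prefix> <ip>
cidr_contains() {
  local net="${1%/*}" bits="${1#*/}" ip="$2" mask
  mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ $(( $(ip_to_int "$net") & mask )) -eq $(( $(ip_to_int "$ip") & mask )) ]
}

is_rfc1918() {
  local ip="${1%/*}"
  cidr_contains 10.0.0.0/8 "$ip" ||
    cidr_contains 172.16.0.0/12 "$ip" ||
    cidr_contains 192.168.0.0/16 "$ip"
}

for cidr in 172.16.0.0/16 172.15.0.0/16 192.168.2.0/24; do
  if is_rfc1918 "$cidr"; then
    echo "$cidr is private"
  else
    echo "$cidr is NOT private"
  fi
done
```

Only the network address is tested, which is enough for subnets whose prefix is at least as long as the range they are compared against.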

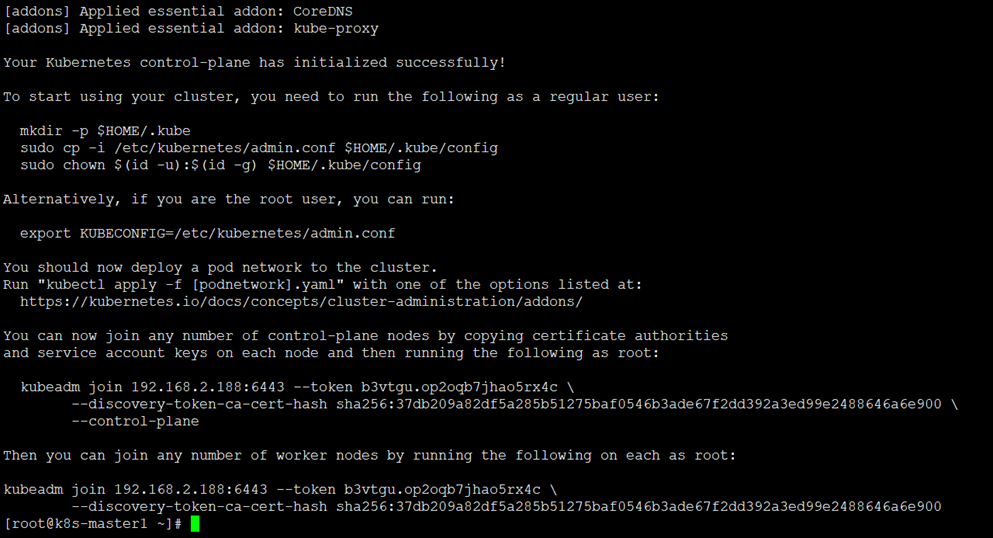

集群初始化成功后返回如下信息:

注:记录生成的最后部分内容,此内容需要在其它节点加入Kubernetes集群时执行。

2. Configure kubectl as prompted

[root@k8s-master1 ~]# mkdir -p $HOME/.kube

[root@k8s-master1 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master1 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@k8s-master1 ~]# export KUBECONFIG=/etc/kubernetes/admin.conf

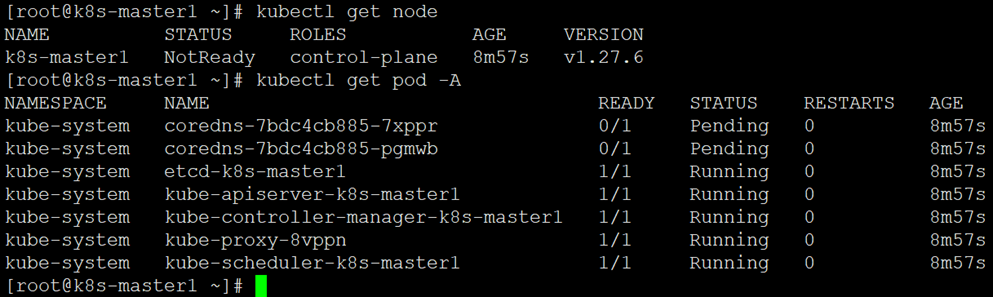

3. Check the nodes and Pods

[root@k8s-master1 ~]# kubectl get node

[root@k8s-master1 ~]# kubectl get pod -A

Note: the node is NotReady because no Pod network add-on has been installed yet, so the coredns Pods cannot start.

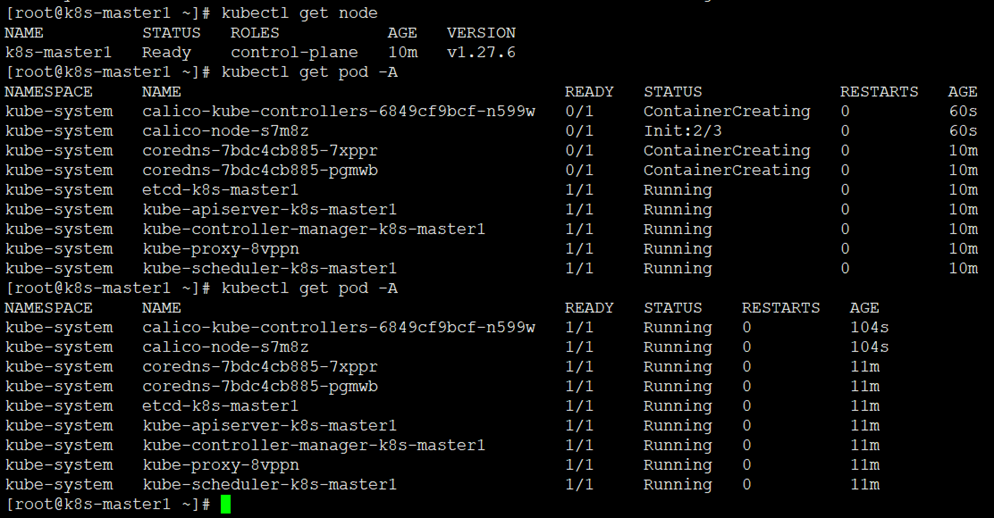

4. Install the Pod network plugin Calico (CNI)

[root@k8s-master1 ~]# kubectl apply -f https://docs.tigera.io/archive/v3.24/manifests/calico.yaml

poddisruptionbudget.policy/calico-kube-controllers created

serviceaccount/calico-kube-controllers created

serviceaccount/calico-node created

configmap/calico-config created

customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/caliconodestatuses.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/ipreservations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/kubecontrollersconfigurations.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created

customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created

clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrole.rbac.authorization.k8s.io/calico-node created

clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created

clusterrolebinding.rbac.authorization.k8s.io/calico-node created

daemonset.apps/calico-node created

deployment.apps/calico-kube-controllers created

5. Check the Pods and nodes again

[root@k8s-master1 ~]# kubectl get node

[root@k8s-master1 ~]# kubectl get pod -A

6. Add control-plane nodes to the Kubernetes cluster

# Distribute the certificates to the other master nodes: copy the certificates from k8s-master1 to k8s-master2 and k8s-master3

[root@k8s-master1 ~]# ssh-keygen

[root@k8s-master1 ~]# ssh-copy-id root@k8s-master2

[root@k8s-master1 ~]# ssh k8s-master2 "cd /root && mkdir -p /etc/kubernetes/pki/etcd && mkdir -p ~/.kube/"

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/ca.crt k8s-master2:/etc/kubernetes/pki/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/ca.key k8s-master2:/etc/kubernetes/pki/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/sa.key k8s-master2:/etc/kubernetes/pki/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/sa.pub k8s-master2:/etc/kubernetes/pki/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/front-proxy-ca.crt k8s-master2:/etc/kubernetes/pki/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/front-proxy-ca.key k8s-master2:/etc/kubernetes/pki/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/etcd/ca.crt k8s-master2:/etc/kubernetes/pki/etcd/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/etcd/ca.key k8s-master2:/etc/kubernetes/pki/etcd/

[root@k8s-master1 ~]# ssh-copy-id root@k8s-master3

[root@k8s-master1 ~]# ssh k8s-master3 "cd /root && mkdir -p /etc/kubernetes/pki/etcd && mkdir -p ~/.kube/"

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/ca.crt k8s-master3:/etc/kubernetes/pki/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/ca.key k8s-master3:/etc/kubernetes/pki/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/sa.key k8s-master3:/etc/kubernetes/pki/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/sa.pub k8s-master3:/etc/kubernetes/pki/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/front-proxy-ca.crt k8s-master3:/etc/kubernetes/pki/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/front-proxy-ca.key k8s-master3:/etc/kubernetes/pki/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/etcd/ca.crt k8s-master3:/etc/kubernetes/pki/etcd/

[root@k8s-master1 ~]# scp /etc/kubernetes/pki/etcd/ca.key k8s-master3:/etc/kubernetes/pki/etcd/
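The same eight files go to each additional control-plane node, so the commands above can be generated from a list. The sketch below only prints the commands (a dry run); `PKI_FILES` and `print_cert_copy_commands` are illustrative names. Pipe the output to `bash` on k8s-master1 once it looks right.

```shell
#!/usr/bin/env bash
# Sketch: print the ssh/scp commands that copy the shared certificates
# to one control-plane node. Nothing is copied by this script itself.
PKI_FILES=(ca.crt ca.key sa.key sa.pub
           front-proxy-ca.crt front-proxy-ca.key
           etcd/ca.crt etcd/ca.key)

print_cert_copy_commands() {
  local host="$1" f
  echo "ssh $host 'mkdir -p /etc/kubernetes/pki/etcd ~/.kube'"
  for f in "${PKI_FILES[@]}"; do
    echo "scp /etc/kubernetes/pki/$f $host:/etc/kubernetes/pki/$f"
  done
}

for host in k8s-master2 k8s-master3; do
  print_cert_copy_commands "$host"
done
```

kubeadm also offers `kubeadm init --upload-certs` together with `--certificate-key` on join as a built-in way to share these files; this guide copies them by hand instead.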

7. Print the node join command on k8s-master1

[root@k8s-master1 ~]# kubeadm token create --print-join-command

kubeadm join 192.168.2.188:6443 --token yk97lr.fq9up0kn2tslg2qt --discovery-token-ca-cert-hash sha256:37db209a82df5a285b51275baf0546b3ade67f2dd392a3ed99e2488646a6e900

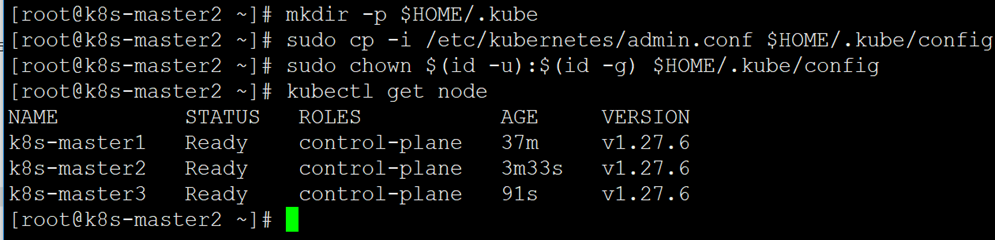
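Each run of `kubeadm token create` issues a new token, and bootstrap tokens expire after 24 hours by default, so the token in a join command has to come from a recent run on your own cluster. The sketch below checks a token's shape (six characters, a dot, sixteen characters, all lowercase letters or digits) and builds the control-plane variant of a join line. Both helper names are illustrative.

```shell
#!/usr/bin/env bash
# Sketch: validate a bootstrap token's shape and build the control-plane
# variant of a join command.
valid_bootstrap_token() {
  [[ "$1" =~ ^[a-z0-9]{6}\.[a-z0-9]{16}$ ]]
}

control_plane_join() {
  # $1: the line printed by "kubeadm token create --print-join-command"
  # Trailing whitespace is trimmed before the flag is appended.
  printf '%s --control-plane\n' "${1%"${1##*[![:space:]]}"}"
}

# On k8s-master1:
#   control_plane_join "$(kubeadm token create --print-join-command)"
```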

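# Join k8s-master2 as a control-plane node: append --control-plane to the join command, using the token and hash printed on your own cluster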

[root@k8s-master2 ~]# kubeadm join 192.168.2.188:6443 --token b3vtgu.op2oqb7jhao5rx4c --discovery-token-ca-cert-hash sha256:37db209a82df5a285b51275baf0546b3ade67f2dd392a3ed99e2488646a6e900 --control-plane

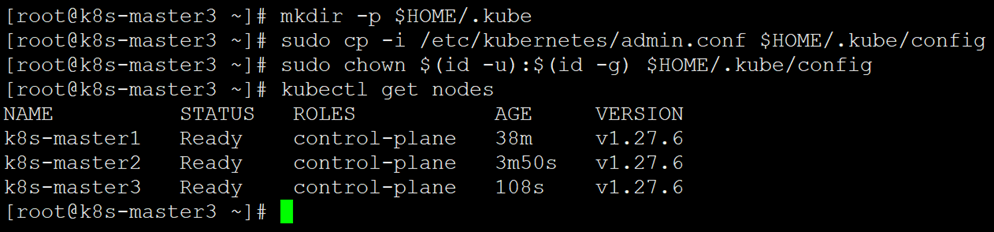

# Join k8s-master3 as a control-plane node

[root@k8s-master3 ~]# kubeadm join 192.168.2.188:6443 --token b3vtgu.op2oqb7jhao5rx4c --discovery-token-ca-cert-hash sha256:37db209a82df5a285b51275baf0546b3ade67f2dd392a3ed99e2488646a6e900 --control-plane

# Check the cluster status

[root@k8s-master2 ~]# mkdir -p $HOME/.kube

[root@k8s-master2 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master2 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@k8s-master2 ~]# kubectl get node

[root@k8s-master3 ~]# mkdir -p $HOME/.kube

[root@k8s-master3 ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master3 ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

[root@k8s-master3 ~]# kubectl get nodes

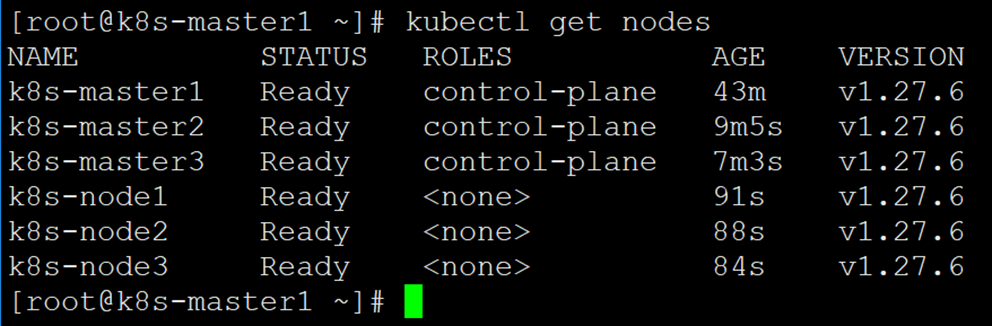

As the screenshot above shows, k8s-master2 and k8s-master3 have joined the K8s cluster.

8. Add worker nodes to the Kubernetes cluster

[root@k8s-node1 ~]# kubeadm join 192.168.2.188:6443 --token b3vtgu.op2oqb7jhao5rx4c --discovery-token-ca-cert-hash sha256:37db209a82df5a285b51275baf0546b3ade67f2dd392a3ed99e2488646a6e900

[root@k8s-node2 ~]# kubeadm join 192.168.2.188:6443 --token b3vtgu.op2oqb7jhao5rx4c --discovery-token-ca-cert-hash sha256:37db209a82df5a285b51275baf0546b3ade67f2dd392a3ed99e2488646a6e900

[root@k8s-node3 ~]# kubeadm join 192.168.2.188:6443 --token b3vtgu.op2oqb7jhao5rx4c --discovery-token-ca-cert-hash sha256:37db209a82df5a285b51275baf0546b3ade67f2dd392a3ed99e2488646a6e900

9. Check the nodes again

[root@k8s-master1 ~]# kubectl get nodes

10. Enable kubectl command completion

[root@k8s-master1 ~]# yum -y install bash-completion

[root@k8s-master1 ~]# echo "source <(kubectl completion bash)" >> /etc/profile

[root@k8s-master1 ~]# source /etc/profile

[root@k8s-master2 ~]# yum -y install bash-completion

[root@k8s-master2 ~]# echo "source <(kubectl completion bash)" >> /etc/profile

[root@k8s-master2 ~]# source /etc/profile

[root@k8s-master3 ~]# yum -y install bash-completion

[root@k8s-master3 ~]# echo "source <(kubectl completion bash)" >> /etc/profile

[root@k8s-master3 ~]# source /etc/profile

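VI. Installing kubernetes-dashboard

Note: the official Dashboard manifest does not expose the Service through a NodePort, so download the YAML file locally and add a NodePort to the Service.

1. Download the manifest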

[root@k8s-master1 ~]# wget https://raw.githubusercontent.com/kubernetes/dashboard/v2.7.0/aio/deploy/recommended.yaml

2. Modify the manifest

[root@k8s-master1 ~]# vim recommended.yaml

# The Service definition needs the following changes

kind: Service
apiVersion: v1
metadata:
  labels:
    k8s-app: kubernetes-dashboard
  name: kubernetes-dashboard
  namespace: kubernetes-dashboard
spec:
  type: NodePort        # added
  ports:
    - port: 443
      targetPort: 8443
      nodePort: 30000   # added
  selector:
    k8s-app: kubernetes-dashboard

[root@k8s-master1 ~]# kubectl apply -f recommended.yaml

namespace/kubernetes-dashboard created

serviceaccount/kubernetes-dashboard created

service/kubernetes-dashboard created

secret/kubernetes-dashboard-certs created

secret/kubernetes-dashboard-csrf created

secret/kubernetes-dashboard-key-holder created

configmap/kubernetes-dashboard-settings created

role.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrole.rbac.authorization.k8s.io/kubernetes-dashboard created

rolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

clusterrolebinding.rbac.authorization.k8s.io/kubernetes-dashboard created

deployment.apps/kubernetes-dashboard created

service/dashboard-metrics-scraper created

deployment.apps/dashboard-metrics-scraper created

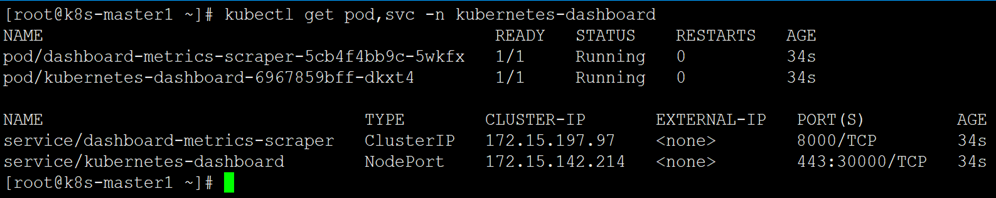
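The nodePort value has to fall inside the API server's NodePort range, which is 30000 to 32767 unless `--service-node-port-range` has been changed. The value 30000 used above sits at the lower edge of that range. A tiny sketch of the check follows; `valid_nodeport` is an illustrative helper, and the default bounds are an assumption about an unmodified cluster.

```shell
#!/usr/bin/env bash
# Sketch: check a port against the NodePort range.
# Assumption: the cluster keeps the default range 30000-32767.
valid_nodeport() {
  local port="$1" min="${2:-30000}" max="${3:-32767}"
  [[ "$port" =~ ^[0-9]+$ ]] && [ "$port" -ge "$min" ] && [ "$port" -le "$max" ]
}

for p in 30000 443; do
  if valid_nodeport "$p"; then
    echo "$p is inside the NodePort range"
  else
    echo "$p is outside the NodePort range"
  fi
done
```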

3. Check the Pods and Services

[root@k8s-master1 ~]# kubectl get pod,svc -n kubernetes-dashboard

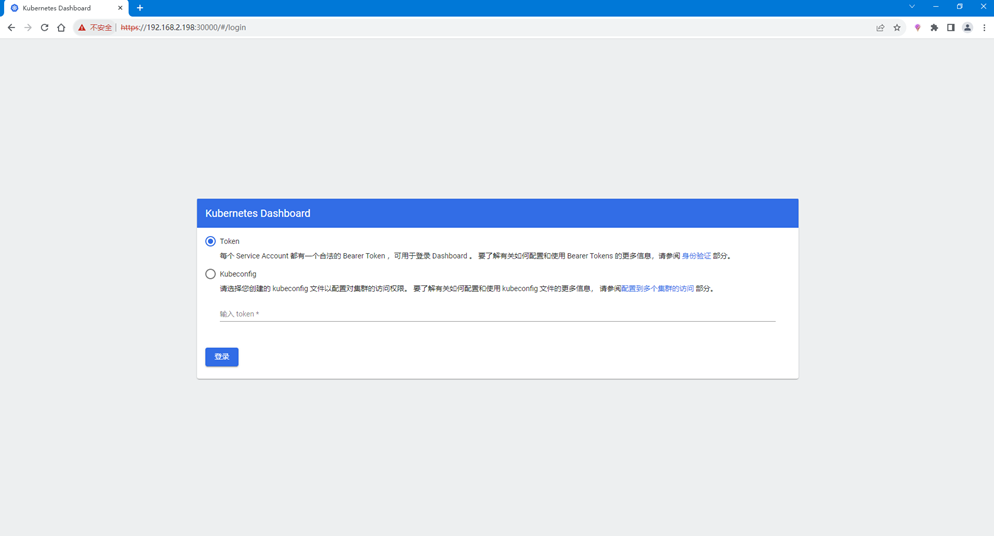

4、访问Dashboard页面

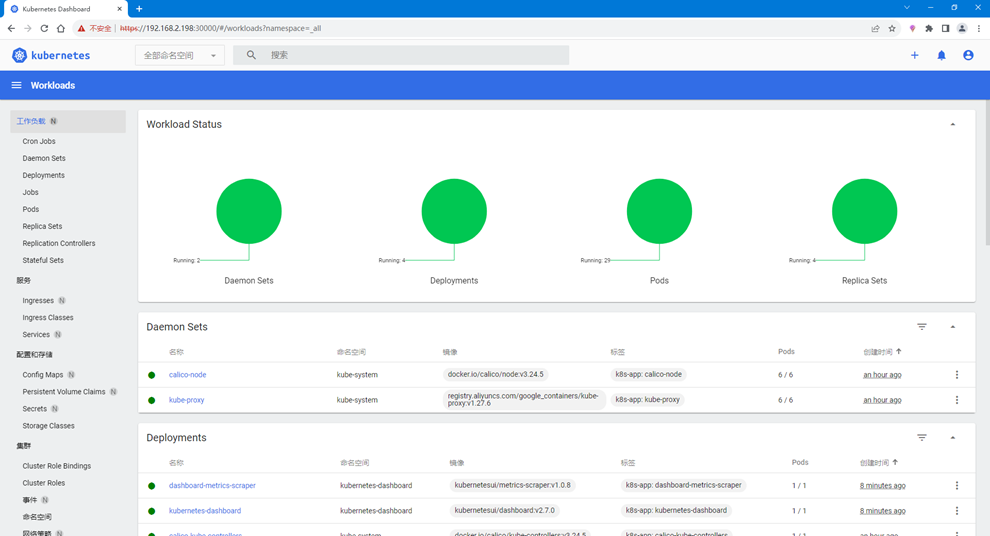

# 浏览器输入https://192.168.2.198:30000/,如下图所示

5. Create a user

[root@k8s-master1 ~]# vim dashboard-admin.yaml

apiVersion: v1
kind: ServiceAccount
metadata:
  name: admin
  namespace: kubernetes-dashboard
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: admin
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: cluster-admin
subjects:
  - kind: ServiceAccount
    name: admin
    namespace: kubernetes-dashboard
---
apiVersion: v1
kind: Secret
metadata:
  name: kubernetes-dashboard-admin
  namespace: kubernetes-dashboard
  annotations:
    kubernetes.io/service-account.name: "admin"
type: kubernetes.io/service-account-token

[root@k8s-master1 ~]# kubectl apply -f dashboard-admin.yaml

serviceaccount/admin created

clusterrolebinding.rbac.authorization.k8s.io/admin created

secret/kubernetes-dashboard-admin created

6. Create a token

[root@k8s-master1 ~]# kubectl -n kubernetes-dashboard create token admin

eyJhbGciOiJSUzI1NiIsImtpZCI6IkZfNllvSkVRWGduR0kxYk5DM3M1VnVFczFPRWh6WE03RXJldFVrVWdfUGMifQ.eyJhdWQiOlsiaHR0cHM6Ly9rdWJlcm5ldGVzLmRlZmF1bHQuc3ZjLmNsdXN0ZXIubG9jYWwiXSwiZXhwIjoxNjk2NzU3NDQ3LCJpYXQiOjE2OTY3NTM4NDcsImlzcyI6Imh0dHBzOi8va3ViZXJuZXRlcy5kZWZhdWx0LnN2Yy5jbHVzdGVyLmxvY2FsIiwia3ViZXJuZXRlcy5pbyI6eyJuYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsInNlcnZpY2VhY2NvdW50Ijp7Im5hbWUiOiJhZG1pbiIsInVpZCI6ImNiMzhhYWNlLTI4YmMtNDVlOC05Mzc4LTI0MGY0YjQzYjdlNCJ9fSwibmJmIjoxNjk2NzUzODQ3LCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZXJuZXRlcy1kYXNoYm9hcmQ6YWRtaW4ifQ.bUWhrBySvHRL1IUkioaFOL_01btNenEzPwNFhKMgPMA8tttSE3dsp5sOpx60EdM4Iqk8TjfuLe83atww3GSRhA-k8xGEmMQBRsnyMGQ8mEfciBzv4hQlR7TPCtpVvB2PaAOXfghUsv6UOTPUb0Y7AJssQ78O79E9dETA1yRcwK02r6fTsLSYzR1n2hTq_kAfGqIFpoL4-7pIqgS98WTEU8S5zVTpBKq3VV7WpZzsW4KlzqLx6fGDsu4WNYwU407GzhCigkAiKx-y1ab3HiuaFIvpGDbtPnMWk_29PjV27DZkSfk5z5aS_zXYvi4s6iKG0xufPxe7BR2Dc-jiSJ2b1A

7. Get the token stored in the Secret

[root@k8s-master1 ~]# Token=$(kubectl -n kubernetes-dashboard get secret |awk '/kubernetes-dashboard-admin/ {print $1}')

[root@k8s-master1 ~]# kubectl describe secrets -n kubernetes-dashboard ${Token} |awk '$1=="token:" {print $2}'

eyJhbGciOiJSUzI1NiIsImtpZCI6IkZfNllvSkVRWGduR0kxYk5DM3M1VnVFczFPRWh6WE03RXJldFVrVWdfUGMifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJrdWJlcm5ldGVzLWRhc2hib2FyZC1hZG1pbiIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50Lm5hbWUiOiJhZG1pbiIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VydmljZS1hY2NvdW50LnVpZCI6ImNiMzhhYWNlLTI4YmMtNDVlOC05Mzc4LTI0MGY0YjQzYjdlNCIsInN1YiI6InN5c3RlbTpzZXJ2aWNlYWNjb3VudDprdWJlcm5ldGVzLWRhc2hib2FyZDphZG1pbiJ9.LAH2qRM3fUnRX6tzHGS0YNcjalmjO-xUrP50jK4HlXPjk9OLwUSzTjYbcA9PysoxwJUDiGAkGkg4DxSHZvkl-Brdqw6Ip6RtwWjuYQpQahRnJKPtuLufgTV0ziy1X5jsGbkzQcxdMTJIgAEeqTEZaUReurpfMKr9h5OCrZFQaWUKpe-jVAKwE7RFd6QitSA5WWD8be1DmtoIIC-ok1RXH-tG7Q10WUcn-vCkcbFQWKvA7Xkce2Es2RrJd8slENHyG3EefCFlZNQwoXJvOg-DXAbT3JhzTeI962aIYixX2iJwC62AUbAbZH8FLqwrCIOfpOwre4y4qrpNrLMtdCK9xw

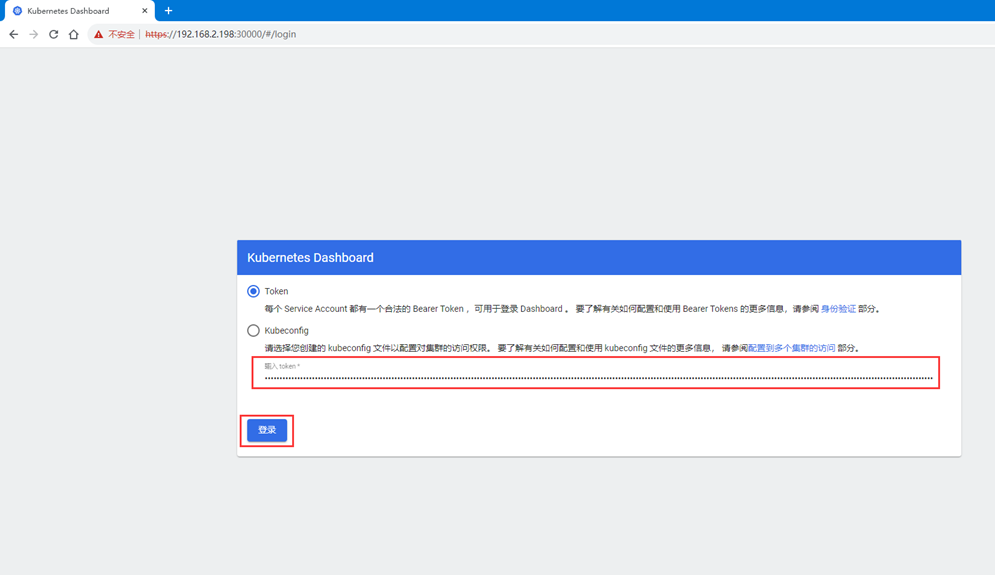
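The two tokens above differ in lifetime. The one from `kubectl create token` carries `exp` and `iat` claims that are 3600 seconds apart in the sample output, so it is valid for one hour, while the Secret-based token carries no `exp` claim. The sketch below prints a token's payload so the claims can be inspected. `jwt_payload` is an illustrative helper, and GNU `base64 -d` is assumed.

```shell
#!/usr/bin/env bash
# Sketch: print the JSON payload (middle segment) of a JWT.
jwt_payload() {
  local part="${1#*.}"     # strip the header segment
  part="${part%%.*}"       # strip the signature segment
  part="$(printf '%s' "$part" | tr '_-' '/+')"   # base64url -> base64
  # Restore the '=' padding that base64url omits.
  while [ $(( ${#part} % 4 )) -ne 0 ]; do part="${part}="; done
  printf '%s' "$part" | base64 -d
  echo
}

# On k8s-master1:
#   jwt_payload "$(kubectl -n kubernetes-dashboard create token admin)"
```

The function only decodes the payload; it does not verify the signature.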

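8. Log in to the Dashboard with the token

Note: if no namespace can be selected after login and the page reports that resources cannot be found, the cause is insufficient permissions. Grant access with the following command: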

[root@k8s-master1 ~]# kubectl create clusterrolebinding serviceaccount-cluster-admin --clusterrole=cluster-admin --user=system:serviceaccount:kubernetes-dashboard:kubernetes-dashboard
